Since adopting AI coding tools, your team's development velocity has probably increased. But have you noticed the quiet surge of pressure building on your reviewers?

AI-Generated PRs Make No Sense

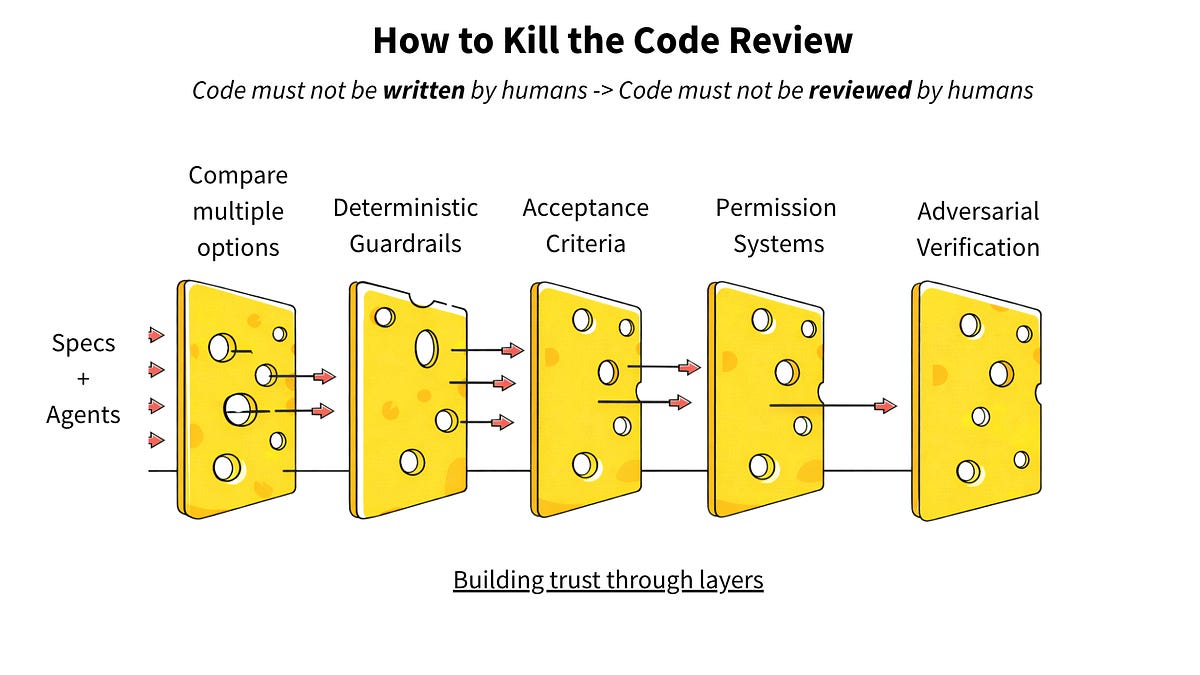

A March 2026 article on Latent.Space titled "How to Kill the Code Review" confronts the structural challenges facing code review in the AI era head-on.

According to Faros.ai data cited in the article, teams with high AI adoption complete 21% more tasks and merge 98% more pull requests — but PR review time increases by 91%.

In other words, as AI generates code at scale, reviewers must read diffs at an unprecedented pace.

But the real problem is not just volume. AI-generated PRs frequently lack any description of why the change was made.

Comparing Real PRs

Let's look at actual pull requests side by side.

PR Without Skill (Raw Claude Code Output)

Summary

- Extend validatePriority() to accept string | number, auto-converting numeric 1-4 to string equivalents
- Fix TOML help document: change priority from type = "number" to type = "string"
- Fix false positive in filter-test-output.js when test names contain "Error"

At first glance, it looks well-organized. But there is no explanation of why string | number was necessary. Was it for compatibility with an external API? A response to a user bug report? A forward-looking design change? The reviewer has no choice but to guess from the diff alone.

PR With sqlew Skill Applied

Decision: PR ADR enforcement via PreToolUse Hook (SKILL.md auto-discovery lacks enforcement power)

- src/cli/hooks/pr-adr.ts: New hook - reads stdin JSON, checks for gh pr create, validates ADR markers (## Decision: / ## Constraint: / ## Other Changes), blocks with template if missing
- src/cli.ts: Add pr-adr command dispatch and help entry

Other Changes

- No files in this PR unrelated to the decision above

The difference is unmistakable. The why (the Decision) is structured at the top of the PR. Each file change is mapped to that decision, and the "Other Changes" section explicitly confirms there are no unrelated modifications.

Reviewers Should Read "Intent," Not "Code"

The Latent.Space article argues for shifting the center of gravity in code review from reading lines of code to verifying intent. The human judgment that matters is whether the right problem is being solved under the right constraints — not tracing through 500-line diffs one line at a time.

sqlew's new Skill "sqlew-pr-adr" implements this philosophy as tooling. It operates as a Claude Code PreToolUse Hook, automatically validating that a Decision section is present in the PR body when gh pr create runs. If it's missing, the hook presents a structured template and blocks the PR from being created.
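
The check described above can be sketched in a few lines. This is a minimal illustration, not the actual src/cli/hooks/pr-adr.ts: the payload field names (tool_name, tool_input.command) reflect my understanding of the JSON that Claude Code passes to a PreToolUse hook, and the evaluateHook function, the template text, and the rule that any single marker is enough to pass are all assumptions made for the example.

```typescript
// Hypothetical sketch of the validation step; sqlew's real hook may differ.
interface HookPayload {
  tool_name?: string;
  tool_input?: { command?: string };
}

interface HookResult {
  block: boolean;
  message: string;
}

// Marker strings taken from the PR description shown earlier in the article.
const ADR_MARKERS = ["## Decision:", "## Constraint:", "## Other Changes"];

// Illustrative template only; the wording is invented for this example.
const TEMPLATE = [
  "## Decision: <what was decided and why>",
  "- <file>: <change made under this decision>",
  "",
  "## Other Changes",
  "- <files unrelated to the decision, or state that there are none>",
].join("\n");

function evaluateHook(payload: HookPayload): HookResult {
  const command = payload.tool_input?.command ?? "";

  // Only Bash calls that run `gh pr create` are in scope.
  if (payload.tool_name !== "Bash" || !/\bgh\s+pr\s+create\b/.test(command)) {
    return { block: false, message: "" };
  }

  // Assumption: one marker anywhere in the command text is enough to pass.
  // Stateless by construction: nothing is read or written besides the payload.
  const hasMarker = ADR_MARKERS.some((marker) => command.includes(marker));
  if (hasMarker) {
    return { block: false, message: "" };
  }

  return {
    block: true,
    message: "PR body has no ADR section. Use this template:\n" + TEMPLATE,
  };
}
```

A real hook script would read the JSON from stdin, call a function like this, and on a block result print the message to stderr and exit with a non-zero status. As far as I know, Claude Code treats exit code 2 from a PreToolUse hook as "block this tool call and show stderr to the model", but check the hooks documentation before relying on that.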

The same sqlew-pr-adr Skill is also available for OpenAI Codex. Since Codex lacks a Hooks mechanism, the AI follows the Skill's workflow to automatically compose decision-structured PR descriptions during PR creation.

How It Works

The core of this feature is sqlew's Skill "sqlew-pr-adr." It extracts keywords from the diff, performs a reverse lookup against decisions accumulated in sqlew, and groups the PR description by decision.

- Diff analysis: Extracts changed files, function names, and module names from git diff
- Decision reverse lookup: Calls sqlew's suggest with extracted keywords to retrieve related decisions
- Structured template: Generates a PR description that groups file changes under each decision
- Stateless design: No state management required; validates the PR body only
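
The first three steps can be sketched as a small pipeline. Everything here is hypothetical: the Decision shape, the Suggest function type standing in for sqlew's suggest (whose real signature I have not verified), and the keyword rule, which only tokenizes file paths rather than function or module names.

```typescript
// Hypothetical shapes; sqlew's real data model and `suggest` call may differ.
interface Decision {
  summary: string;
  keywords: string[];
}

// Stand-in for sqlew's suggest: keywords in, related decisions out.
type Suggest = (keywords: string[]) => Decision[];

// Step 1: collect changed file paths from "diff --git a/<path> b/<path>" headers.
function changedFiles(diff: string): string[] {
  const files = new Set<string>();
  for (const line of diff.split("\n")) {
    const match = /^diff --git a\/(.+) b\/(.+)$/.exec(line);
    if (match) files.add(match[2]);
  }
  return Array.from(files);
}

// Simplified keyword extraction: path tokens longer than two characters.
function keywordsFor(path: string): string[] {
  return path.toLowerCase().split(/[^a-z0-9]+/).filter((t) => t.length > 2);
}

// Steps 2 and 3: look up a decision per file, then group files under it.
function buildDescription(diff: string, suggest: Suggest): string {
  const byDecision = new Map<string, string[]>();
  const other: string[] = [];

  for (const file of changedFiles(diff)) {
    const [top] = suggest(keywordsFor(file));
    if (!top) {
      other.push(file); // no related decision found
      continue;
    }
    const heading = `## Decision: ${top.summary}`;
    byDecision.set(heading, (byDecision.get(heading) ?? []).concat(file));
  }

  const sections: string[] = [];
  byDecision.forEach((files, heading) => {
    sections.push([heading].concat(files.map((f) => `- ${f}`)).join("\n"));
  });

  const otherLines =
    other.length > 0
      ? other.map((f) => `- ${f}`)
      : ["- No files in this PR unrelated to the decisions above"];
  sections.push(["## Other Changes"].concat(otherLines).join("\n"));

  return sections.join("\n\n");
}
```

Passing the lookup in as a function keeps the sketch independent of how sqlew is actually called. It also makes one property easy to see: if the lookup returns nothing for every file, the output collapses to a single "Other Changes" section.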

Setup

For Claude Code: If you have already installed sqlew, just install the sqlew plugin. The PreToolUse Hook is automatically configured to enforce decision documentation on gh pr create.

claude plugin marketplace add sqlew-io/sqlew-plugin
claude plugin install sqlew

For OpenAI Codex: Clone the sqlew-codex-skills repository from GitHub and copy the files to your Codex directory.

git clone https://github.com/sqlew-io/sqlew-codex-skills
cp sqlew-codex-skills/copy_to_codex_dir/* ~/.codex

The Skill Alone Isn't Enough: The Knowledge Base Is What Matters

However, simply installing the sqlew-pr-adr Skill won't deliver these results on its own.

What the Skill does is reverse-lookup decisions accumulated in sqlew and structure them into the PR description. If there is no knowledge base of decisions and constraints to reference, the Skill has nothing to work with — and the result is a PR with only an "Other Changes" section.

The real power comes from integrating sqlew into your daily development workflow: recording design discussions from Plan Mode as decisions, accumulating coding conventions as constraints, and building up the knowledge base over time. Only with this foundation does the "why" behind each change emerge naturally in PR descriptions.

When change intent is structured in PRs, reviewers can focus on the essential judgment: "Is this decision sound?" and "Does the change scope align with the stated decision?" Instead of reading AI-generated diffs line by line, review becomes a verification of alignment between intent and implementation.

The sqlew-pr-adr Skill is the first step toward transforming the AI coding review experience from an endurance test into informed judgment.

References

- Jain, A. (2026). "How to Kill the Code Review" — Latent.Space — https://www.latent.space/p/reviews-dead